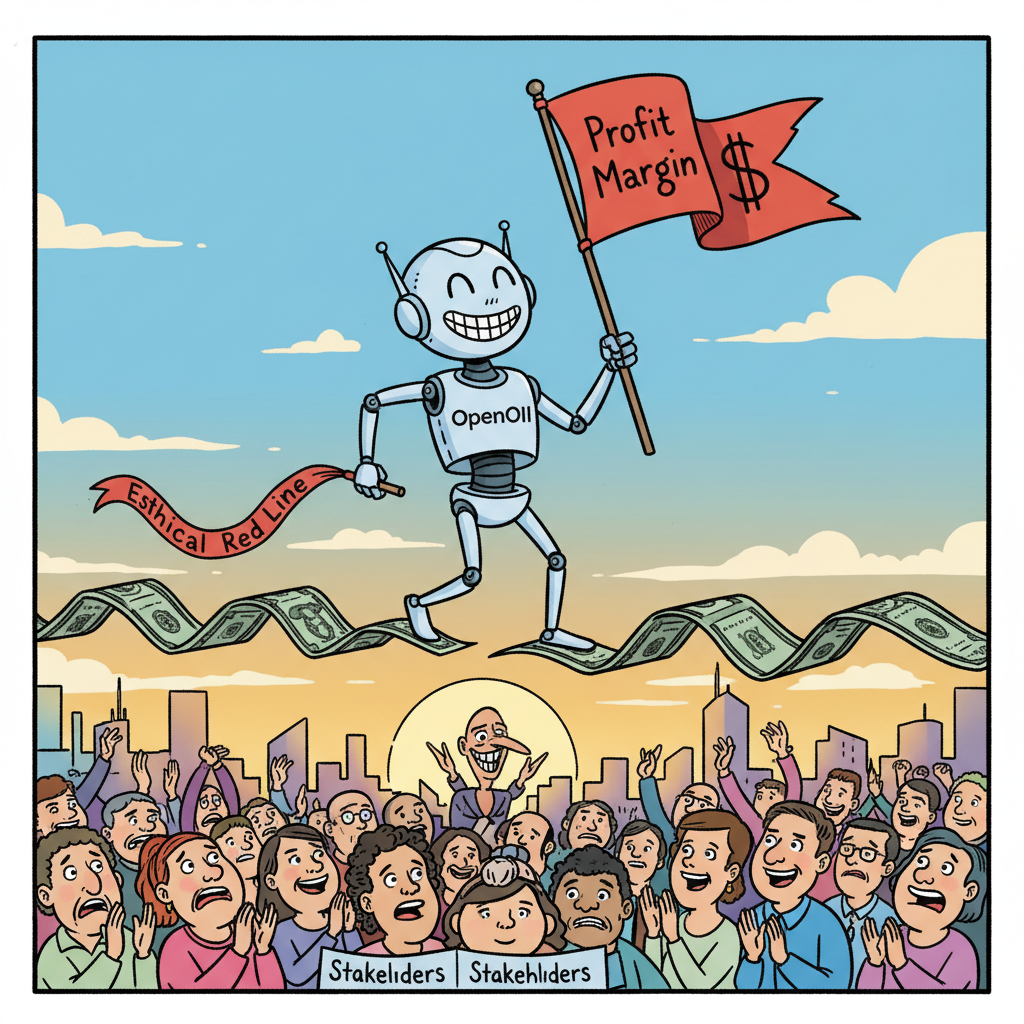

SAN FRANCISCO – Following the high-profile resignation of a senior robotics leader over ethical concerns regarding surveillance and autonomous weapons, OpenAI has unveiled its updated 'Ethical Red Line' policy. The new guideline dictates that the company will only pursue projects that are 'ethically questionable, but not yet legally actionable, and definitely profitable.'

“We take all feedback seriously, especially when it comes from someone who has already left and can no longer disrupt internal meetings,” stated OpenAI spokesperson Chip Morality in a press release. “Our commitment to responsible AI development is unwavering, provided responsible AI development doesn’t mean leaving money on the table. We’re simply optimizing for both innovation and shareholder value, which sometimes requires a flexible definition of 'existential threat.'”

The former employee, who cited fears of her work contributing to military applications, reportedly resigned after realizing her concerns were being filed under 'employee suggestions' rather than 'urgent operational halts.' Sources close to the company indicate that the Pentagon contract, which reportedly involves advanced AI for defense systems, was deemed 'too lucrative to ignore' by the board.

“It’s a delicate balance,” added Morality, adjusting his tie. “We want to build AI that benefits humanity, and if humanity needs a really efficient drone swarm, who are we to say no?” He concluded by noting that the company is actively hiring for a new 'Ethics Compliance Officer' whose primary duty will be to draft compelling press releases.