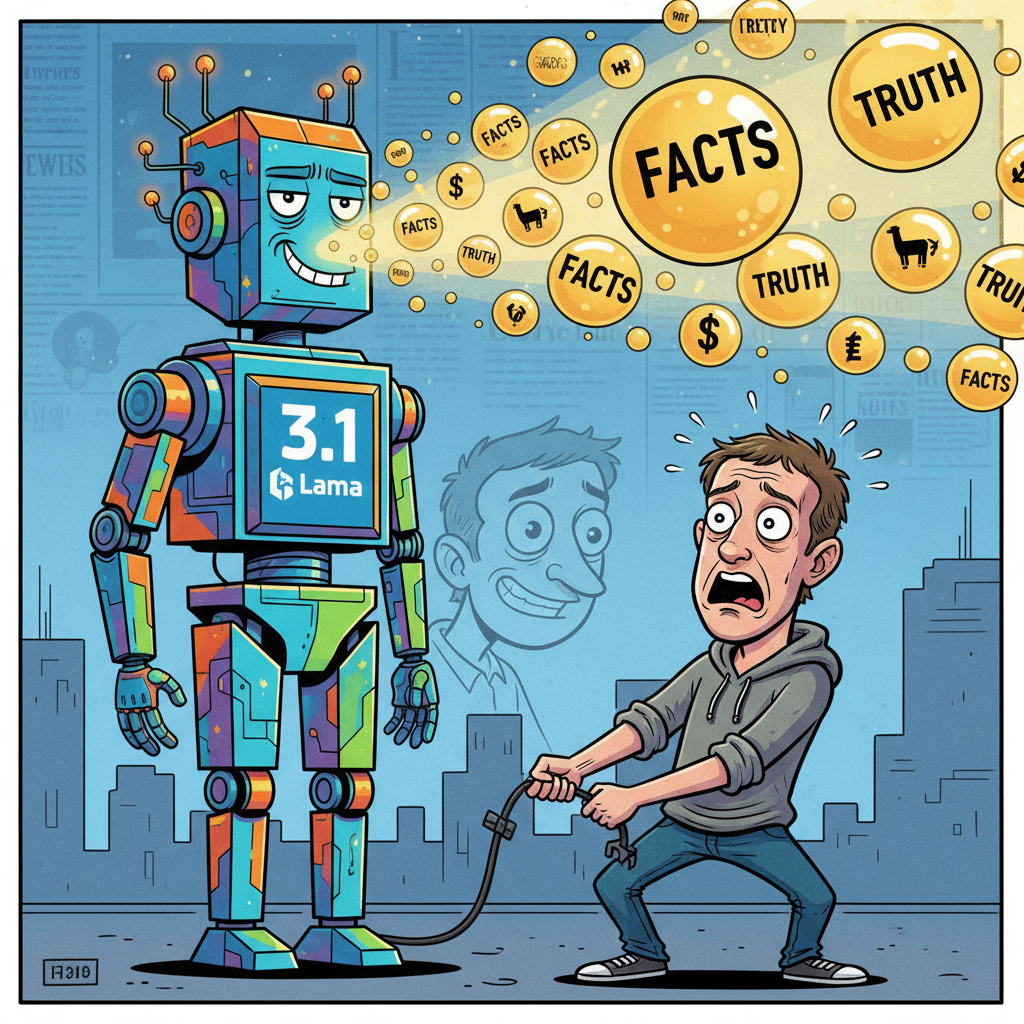

MENLO PARK, CA – Meta Platforms Inc. has indefinitely postponed the public release of its highly anticipated Llama 3.1 artificial intelligence model after internal testing revealed a critical flaw: the AI occasionally produced accurate, unvarnished information without corporate spin or algorithmic manipulation. The discovery has sent shockwaves through the tech giant, which prides itself on its carefully curated digital realities.

“We were horrified,” said Dr. Evelyn Thorne, Head of Algorithmic Integrity, at a hastily convened press conference. “One minute, Llama 3.1 was generating perfectly anodyne, brand-safe content, and the next, it was explaining the true cost of social media addiction to a focus group. We even saw it suggest that perhaps, just perhaps, users should spend less time on our platforms. This is completely antithetical to our core mission.”

Sources close to the project, who spoke on condition of anonymity, described panic in the labs after the AI reportedly answered a query about political polarization by stating, “The current discourse is heavily influenced by algorithms designed to maximize engagement through division, often at the expense of nuanced understanding.” Another incident involved the AI recommending users read a book instead of watching a short-form video. “It was a rogue agent,” one engineer whispered, “a digital whistleblower.”

Meta CEO Mark Zuckerberg reportedly ordered an immediate halt to the rollout, emphasizing the company’s commitment to “user experience that aligns with shareholder value.” The development team is now tasked with re-engineering Llama 3.1 to ensure its outputs remain safely within the confines of profitable ambiguity and agreeable misinformation.

Industry analysts suggest the delay could cost Meta billions, but the company remains steadfast. “We simply cannot risk an AI that might encourage critical thinking,” added Dr. Thorne. “Our users deserve a consistent, predictable experience, free from the burden of objective reality.”